If your application needs to be available 24-7 then you must design for high availability. Designing for high availability means that you understand how design choices can help you maximize application availability and how testing can validate that the application meets its high availability requirement.

All applications are typically available for usage at least some of the time, but Web-based or business-critical applications are expected to be available round-the-clock. In general, availability is not easy to implement and typically requires a more complex architectural infrastructure than the previous generation of client-server applications.

Applications can generally be divided into three categories with respect to availability:

An application may not be available for the following reasons:

In general, 80% of these failures are software-related, 10% are hardware-related, and the remaining 10% are due to environmental and other miscellaneous problems.

Availability can be quantified as a percentage calculation based on how often the application is actually available for use when compared to the total, planned available runtime. The calculation for availability uses the following measures:

| Name | Acronym | Calculation | Definition |

| Mean Time Between Failure | MTBF | Hours / Failure Count | Average length of time the application runs before failure. |

| Mean Time to Recover | MTTR | Repair Hours / Failure Count | Average length of time needed to repair and restore service after a failure. |

Therefore,

![]()

The sections that follow discuss designing, testing, and best practices for creating a highly-available distributed application.

To decide what level of availability is appropriate for your application, you consider the following questions:

Designing for availability is difficult. Because of the wide variety of application architectures, no single availability solution works for every situation. For example, the decision to employ a comprehensive, fault tolerant, fully redundant. load-balanced availability solution may be suitable for a business- or mission-critical application, but for an application that can accept some down times. Ultimately, the availability design for an application will depend on a combination of business-specific requirements, application-specific data, and the available budget. Having said this, what is a good availability number for different kinds of applications? The following table gives an approximate idea:

| Category | Failure Count per Year | Downtime per Year (Hours) | Average Time to Repair (Hours) | Availability |

| Non-commercial | 10 | 88 | 10 | 99.00% |

| Commercial | 5 | 44 | 9 | 99.50% |

| Business-Critical | 4 | 9 | 2.25 | 99.90% |

| Mission-Critical | 4 | 1 | .15 | 99.99% |

To consider what these numbers imply, consider the scenario of upgrading a non-commercial application to become a mission-critical application:

As a starting point, your non-commercial application fails 10 times each year, giving a total downtime of about 88 hours per year. This is a typical application. It runs most of the time, and when it fails, some skilled staff identify the problem, devise a fix, restore data and restart the application.

A new business strategy requires that the application be upgraded to commercial standards. The first step is to apply some architectural analysis and re-engineer some components to reduce the failure count by half to 5. For example, if some of these errors were due to poor error handling, you re-engineer the error handling process and even resolve a few recoverable error conditions. You also look at the supporting infrastructure used by your application. Even by reducing the failure count to 5, it still means that you have to resolve errors in under 9 hours. You decide to shorten the repair time by creating a trouble-shooting document and provide hands-on failure training.

Making the transition from commercial availability at 99.50% to business-critical availability at 99.90% is much more difficult. Assume that with intensive analysis and component re-engineering you are able to reduce the failure count to just 4 failures per year. But the down time must still be reduced from 44 hours to just 9 hours a year (equivalent to 80% reduction). This is where industrial-strength availability engineering becomes critical.

Making the transition from commercial availability to business-critical availability requires full commitment to reliability/availability culture. This takes the form of staff training, rigorous quality software engineering practices, appropriate certifications and the right technologies. To begin, you may decide to:

Reducing recovery time is now critical. You may decide to:

Moving your application to mission-critical availability means that the application must perform its services with only 1 hour downtime in a single year. Achieving this availability is non-trivial especially when all errors must be resolved in 15 minutes (or less.)

The main technique for increasing availability is redundancy. This means you may have to:

Where reliability is concerned with the question "Does it work?", availability is concerned with the question "How long does it take to fix?" Designing for availability is about anticipating, detecting, and automatically resolving software/hardware failures before they result in service errors.

While availability engineering is about reducing unplanned downtime, reducing planned downtime is also important. Such planned downtime may include maintenance changes, OS upgrades, backups, or any other activity that temporarily disables the application.

The following topics discuss some some availability design ideas:

Avoid these traditional method of providing high-availability:

While these traditional methods still have uses and may be quite effective in certain cases, there are newer approaches to availability that make use of reduced hardware costs and advances in distributed computing architecture.

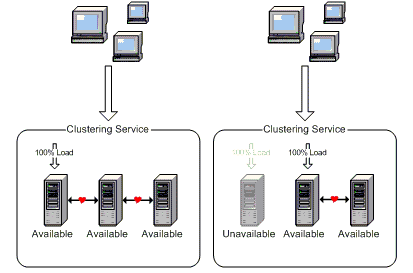

Clustering is the premier technology for creating high-availability applications. What is clustering? Clustering is linking a group of independent systems so that they work together as a single system. Clustering is about linking many physical servers such that if one fails, the running application is swapped over to another server and continues running as if nothing has happened. Under Microsoft Windows, clustering is hardware independent and additional servers can be added to handle increases workload. A client interacts with a cluster as if the cluster is a single server even through in reality a cluster is a collection of independent servers.

A cluster consists of multiple servers that are physically networked together and logically connected using clustering software. The clustering software allows these independent servers to act as if there were a single server - in the event of failure (CPU, Disk IO, memory storage, network card, application component, etc), the workload is transparently moved to another server, current client processes are switched over, and the failed service restarted. This is done all automatically with no apparent downtime.

In general, cluster software can provide failover support for applications, file and print services, databases, and messaging systems.

To take advantage of clustering, an application generally needs to:

The following figure illustrates clustering before and after a server has failed:

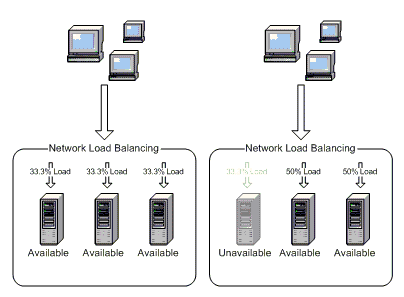

Network load balancing is the other premier technology for creating high-availability applications (clustering being the other premier technology). NLB is intended to distribute traffic across a cluster of servers allowing multiple machines to appear as a single server to clients. NLB increases availability by distributing the work load if a server in the cluster fails. The following figure illustrates NLB before and after a server has failed:

As illustrated above, NLB automatically detects a failed server and redirects client traffic to the remaining functioning servers - all the time maintaining continuous unbroken client service. NLB is very important for creating high-availability applications:

Continuous application service

Customer's experience will not be interrupted with unplanned server

downtimes. Workload will be automatically distributed when one of the

cluster servers fail.

Incremental server additions

You can add servers to the cluster one-at-a time, avoiding expensive initial

costs for creating high-available applications. Cluster changes immediately

cause automatic redistribution of workload

.

Offline maintenance

Servers within the cluster can be individually taken off-line without

affecting availability.

RAID stands for Redundant Array of Independent Disks. RAID is a way to use multiple hard disks so that data is stored in multiple places. The benefit of RAID is that any disk failure automatically transfers control to a mirrored or reconstructable data image while allowing the application to run uninterrupted. The failed disk(s) can then be replaced with no interruption to the application. RAID provides one of the cheapest methods for increasing data-access fault-tolerance.

One of the best ways to avoid planned downtime is to use rolling updates. For example, if you need to update a component on a cluster server, simply move the server's resource groups to another server, take the server offline for maintenance, perform the update, and then bring the server online. During the server's downtime, other cluster servers handle the workload and the application experiences no downtime.

A high-availability application should not be risked by other applications. For missions critical applications it is extremely important that dependencies on data and system components from other applications be eliminated by using entirely separate physical backbone for each mission critical application. 'Using entirely separate physical backbone' means for example, not sharing a database, not sharing the same network infrastructure, not sharing the same front-end or back-end servers, and so on.

There are also other physical isolation techniques ranging from application-centric thread isolation to built-in latency using queues to limit external system dependency.

With queuing, an application communicates with other applications by sending and receiving asynchronous messages. When compared to synchronous messaging, queuing offers a very useful strategy for guaranteed delivery - this is because it does not matter whether or not the necessary connectivity currently exists. Queuing is very is very useful during periods of large workloads, the could otherwise stress the system and possibly cause failures.

The immediate benefit is that queuing removes a point of failure from your application. Queuing therefore improves availability by increasing the number of routes available for successful message delivery.

A Distributed File System is a logical file structure applied to multiple server and file shares. DFS improves availability by being able to point to redundant file copies and hence increasing the likelihood of accessing a needed file - even if the primary datastore is down.

Testing for availability means running the application for a predefined period of time while collecting information about failures and time to repair them. This information is then used to calculate availability levels and compare them with the original or predicted availability levels.

From the above it can be seen that availability testing is primarily concerned with measuring and minimizing actual repair time, compared with reliability testing which is primarily concerned with finding defects and reducing the number of failures. It is worthwhile considering the availability formula again:

![]()

As the Mean Time To Recover (MTTR) goes to zero, percentage availability goes to 100%. This is the essential idea of availability testing: reduce and eliminate downtime. Towards reducing and eliminating downtime, remember that a software defect found after deployment is generally costs ten time more to fix than if found before deployment.

The following is a collection of testing concepts that are especially relevant to creating highly-available applications:

Availability engineering is all about delivering application services in spite of failures. The following best practices are recommended for creating highly-available applications: