Scalability

Summary

The key characteristic of a scalable application is that additional load requires only additional resources, not extensive modification of the application itself. In other words, scalability is the ability to add resources and obtain a linear increase in service capacity.

Scalability must be part of the design process; it is not a feature that can be added later or during deployment. The decisions you make during the design and early coding phases will largely dictate the scalability of your application. Note, however, that scalability is a design concern of distributed applications rather than of stand-alone applications.

Distributed applications are a step beyond client-server applications because they are designed as n-tier applications. A distributed architecture promotes scalable design by sharing resources such as business and database components.

Scalability requires a balancing act between software and hardware. For example, building a network-load-balanced cluster of application servers will not benefit a client application if the server-side code was written to run on a single server (an example of location affinity rather than location transparency, discussed under Scaling Out). Likewise, a highly scalable application connected to a low-bandwidth network will not handle heavy loads once traffic saturates the network.

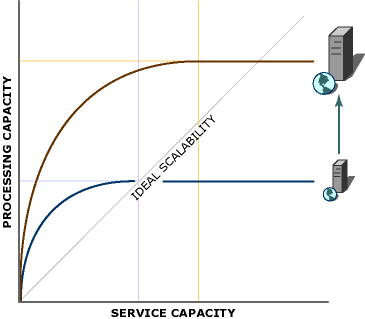

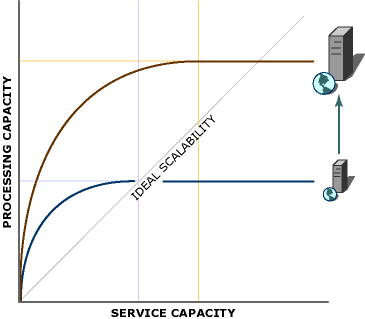

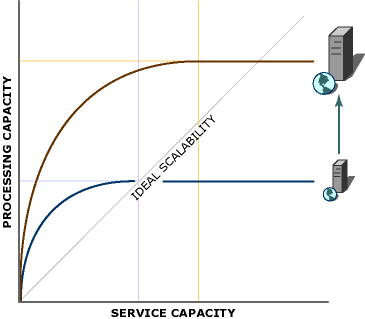

Scaling Up

Scaling up means achieving scalability by using bigger and faster hardware: adding more memory, adding more processors, or replacing old processors with faster ones. Scaling up typically allows an application to increase its capacity without code changes. Administration also stays the same, because there is still only one machine to manage. Scaling up is summarized below:

Note that scaling up does not increase capacity linearly. Instead, the performance gain curve flattens toward a limit as more resources are added. For example, running a server on four processors instead of one does not raise its capacity to 400% of the uniprocessor version, because synchronization between the processors and contention over a single shared memory bus reduce the gain.
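The text does not give a formula for this diminishing return. One common way to model it is Amdahl's law (swapped in here, not taken from the original), which assumes that a fixed fraction of the work cannot run in parallel. The sketch below computes the predicted speedup; the 90% parallel fraction is an assumption chosen only for illustration. Amdahl's law does not model memory-bus contention directly, so measured gains can be lower still.

```python
def amdahl_speedup(processors: int, parallel_fraction: float) -> float:
    """Speedup predicted by Amdahl's law for the given parallel fraction of the work."""
    if processors < 1 or not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("need processors >= 1 and 0 <= parallel_fraction <= 1")
    serial_fraction = 1.0 - parallel_fraction  # work that runs on one processor only
    return 1.0 / (serial_fraction + parallel_fraction / processors)


if __name__ == "__main__":
    # Assumption for illustration: 90% of the work parallelizes, 10% is serialized.
    for n in (1, 2, 4, 8, 16):
        print(f"{n:2d} processors: {amdahl_speedup(n, 0.9):.2f}x")
```

With a parallel fraction of 0.9, four processors give roughly a 3.1x speedup rather than 4x, and no number of processors can exceed 1 / (1 - 0.9) = 10x.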

Once every hardware component has been upgraded to its maximum, you reach the real limit of the machine's processing capacity. At that point, the next step in scaling up is to move to a bigger and faster machine.

Scaling up also presents another potential problem: running an application on a single machine creates a single point of failure, which greatly diminishes system availability.

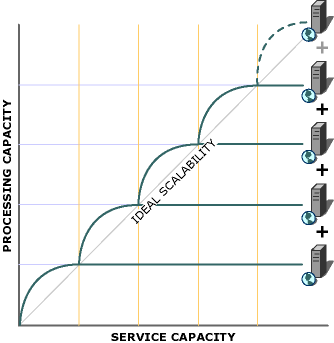

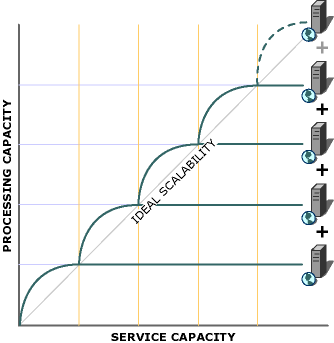

Scaling Out

Scaling out is an alternative to scaling up. Scaling out distributes the processing load over multiple machines. Although scaling out uses multiple machines, they share the load and appear to clients as a single server. Scaling out is summarized below:

Also note that dedicating several machines to a common task increases application fault tolerance. However, from an administrator's point of view, scaling out presents greater management challenges due to the increased number of servers.

Configuring multiple servers to share the load from clients requires special software. On Windows, you can use a variety of techniques, including clustering and load balancing. Load balancing distributes client requests across a cluster of servers, so the application scales out as servers are added. Load balancing also provides redundancy, allowing the cluster to remain available to users even if one or more servers fail.
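To make the redundancy point concrete, here is a minimal sketch of a load balancer that dispatches requests round-robin and skips servers marked as failed. Round-robin is only one common policy chosen for illustration; this is not a model of how Windows Network Load Balancing itself distributes traffic, and the class and server names are hypothetical.

```python
class LoadBalancer:
    """Round-robin dispatcher that skips servers marked as down (a sketch)."""

    def __init__(self, servers):
        self._servers = list(servers)
        self._healthy = set(self._servers)
        self._next = 0  # index of the next server to try

    def mark_down(self, server):
        self._healthy.discard(server)

    def mark_up(self, server):
        self._healthy.add(server)

    def pick(self):
        # Try each server at most once, continuing from where the last pick stopped.
        for _ in range(len(self._servers)):
            server = self._servers[self._next]
            self._next = (self._next + 1) % len(self._servers)
            if server in self._healthy:
                return server
        raise RuntimeError("no healthy servers available")


lb = LoadBalancer(["web1", "web2", "web3"])
first_round = [lb.pick() for _ in range(3)]
lb.mark_down("web2")  # simulate a failed server
after_failure = [lb.pick() for _ in range(4)]
print(first_round, after_failure)
```

Requests keep flowing to the remaining servers after `web2` fails, which is the availability benefit described above.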

The key to successful scaling out is location transparency. If any of the application code depends on knowing which server is running it, the application has location affinity instead, and scaling out will be difficult. If you design an application with location transparency in mind, scaling out becomes a much easier task.
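The difference between affinity and transparency can be shown with a small sketch, assuming two hypothetical application servers behind a round-robin balancer. When each server keeps login sessions in its own memory, a follow-up request routed to the other server cannot see the login (affinity); when both servers use a shared store, standing in for something like a database, the request succeeds on either server (transparency).

```python
from itertools import cycle


class AppServer:
    """A hypothetical application server that keeps login sessions in a dict."""

    def __init__(self, name, session_store=None):
        self.name = name
        # Without a shared store, sessions live only in this server's memory (location affinity).
        self.sessions = {} if session_store is None else session_store

    def login(self, user):
        self.sessions[user] = {"logged_in": True}

    def is_logged_in(self, user):
        return self.sessions.get(user, {}).get("logged_in", False)


def second_request_sees_login(servers):
    """Send a login and then a follow-up request through a round-robin balancer."""
    balancer = cycle(servers)
    next(balancer).login("ann")                # request 1 lands on the first server
    return next(balancer).is_logged_in("ann")  # request 2 lands on the second server


affinity = second_request_sees_login([AppServer("web1"), AppServer("web2")])
shared = {}  # stand-in for a shared session store
transparency = second_request_sees_login([AppServer("web1", shared), AppServer("web2", shared)])
print(affinity, transparency)
```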

Designing for Scalability

Primary design goal: efficient resource management. Contention for resources is the root cause of all scalability problems.

Decisions made in the design phase have the greatest impact on scalability. As the figure below shows, good design is literally the foundation of a highly scalable application:

As the scalability pyramid indicates, hardware, software, and code tuning are only a small part of the scalability solution. Design, at the base of the pyramid, has the greatest influence on scalability, and your ability to affect scalability decreases as you move up the pyramid. Smart design can add much more to an application's scalability than hardware can.

Because the primary design goal for scalability is efficient resource management, design is not limited to a specific component or tier of an application. As a designer, you must consider scalability at all levels, from the data store to the user interface. The following recommendations can help when designing for scalability.

- Do Not Wait

A synchronous method blocks its caller until it returns a result or confirms that an action succeeded; the caller cannot continue until every action associated with the operation has either succeeded or failed.

Applications designed around synchronous methods can hurt scalability because of contention over resources: while one process or method holds a resource, no other process or method can access it, so they must wait. No process or method should wait longer than necessary. To alleviate this situation, use asynchronous processing: long-running operations are queued and completed later by a separate thread, so the caller does not have to wait.

For example, e-commerce sites need to validate credit cards during checkout. On a high-volume site, credit card validation can become a bottleneck if the validation process is slow. This process is a typical candidate for asynchronous processing: a separate thread sends the validation result back to the client once it is available, while other clients proceed with their own validations.
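The queue-plus-worker pattern described above can be sketched as follows. `validate_card` is a hypothetical placeholder for a slow call to a payment gateway (it only checks for 16 digits after a short sleep), and the callback stands in for sending the result back to the client. A single worker thread is used for simplicity; a real site would likely use a pool of workers.

```python
import queue
import threading
import time


def validate_card(card_number):
    # Hypothetical stand-in for a slow payment-gateway call.
    time.sleep(0.1)  # simulate network latency
    return card_number.isdigit() and len(card_number) == 16


class AsyncValidator:
    """Queues validation requests and processes them on a background thread."""

    def __init__(self):
        self._jobs = queue.Queue()
        self._worker = threading.Thread(target=self._run, daemon=True)
        self._worker.start()

    def submit(self, card_number, on_result):
        # Returns immediately; the caller is not blocked while validation runs.
        self._jobs.put((card_number, on_result))

    def _run(self):
        while True:
            job = self._jobs.get()
            if job is None:  # sentinel: stop the worker
                break
            card_number, on_result = job
            on_result(card_number, validate_card(card_number))

    def close(self):
        # Jobs are processed in FIFO order, so everything queued before the sentinel completes.
        self._jobs.put(None)
        self._worker.join()


results = {}


def record(card_number, ok):
    # Runs on the worker thread; a real site might notify the client here.
    results[card_number] = ok


validator = AsyncValidator()
validator.submit("4111111111111111", record)  # returns immediately
validator.submit("1234", record)
print("checkout requests accepted; validation continues in the background")
validator.close()  # demo only: wait for queued work to finish
print(results)
```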

- Do Not Fight For Resources

Because contention for resources is the root cause of all scalability problems, a shortage of memory, processor cycles, network bandwidth, or database connections will lead to an application that does not scale.

Regardless of design, every distributed application has finite resources. Besides using asynchronous processing to release resources as soon as possible, you can take other steps to avoid resource contention. Scarce resources should be acquired only if necessary and, if so, acquired as late as possible and released as early as possible. For example, return database connections to the pool as soon as possible, and if a transaction needs to lock data, lock only the specific rows it needs or, if it must lock the whole table, hold that lock for the shortest possible time.
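The acquire-late, release-early rule can be sketched with a minimal connection pool built on `queue.Queue` and a context manager, so a connection goes back to the pool the moment the `with` block ends, even on error. The pool class, the `quote_total` helper, and the placeholder query are hypothetical; SQLite in-memory connections are used only so the example runs without a database server.

```python
import queue
import sqlite3
from contextlib import contextmanager


class ConnectionPool:
    """A minimal fixed-size connection pool (a sketch, not production code)."""

    def __init__(self, size, database=":memory:"):
        self._idle = queue.Queue()
        for _ in range(size):
            self._idle.put(sqlite3.connect(database, check_same_thread=False))

    @contextmanager
    def connection(self, timeout=5.0):
        conn = self._idle.get(timeout=timeout)  # waits if every connection is in use
        try:
            yield conn
        finally:
            self._idle.put(conn)  # returned as soon as the with-block exits, even on error

    def idle_count(self):
        return self._idle.qsize()


def quote_total(pool, unit_price_cents, quantity):
    # Hypothetical helper: prepare inputs before taking a connection...
    params = (unit_price_cents, quantity)
    with pool.connection() as conn:  # ...acquire as late as possible...
        (total,) = conn.execute("SELECT ? * ?", params).fetchone()
    # ...and release it before any further, non-database work.
    return total


pool = ConnectionPool(2)
print(quote_total(pool, 250, 4), pool.idle_count())
```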

- Design for Commutability

Commutability is one of the most overlooked ways to reduce resource contention. Two or more methods are commutative if they can be applied in any order and still produce the same result. Operations that can be performed without transactions are likely candidates.

For example, a product promotion procedure might reduce a product's price by 10% and add the product to the [Promotions] table. This procedure is commutative because it does not matter whether you reduce the price first or add the product to the [Promotions] table first. Scalability can be improved here by performing all such product promotions on temporary tables and then updating the relevant permanent tables with the net change in one transaction.
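A small sketch of the promotion example, assuming an in-memory `Catalog` as a hypothetical stand-in for the price table and the [Promotions] table. The two operations touch different data, so applying the staged batch in either order yields the same catalog; `apply_batch` stands in for applying the net change in one transaction. Prices are kept in integer cents to avoid floating-point rounding.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Catalog:
    prices: dict           # product id -> price in cents
    promotions: frozenset  # product ids currently in the [Promotions] table


def reduce_price(catalog, product_id, percent):
    prices = dict(catalog.prices)  # copy so the original catalog is unchanged
    prices[product_id] = prices[product_id] * (100 - percent) // 100
    return Catalog(prices, catalog.promotions)


def add_to_promotions(catalog, product_id):
    return Catalog(dict(catalog.prices), catalog.promotions | {product_id})


def apply_batch(catalog, operations):
    # Apply staged operations in one step (a stand-in for one transaction).
    for operation, *args in operations:
        catalog = operation(catalog, *args)
    return catalog


start = Catalog({"p1": 1000}, frozenset())
staged = [(reduce_price, "p1", 10), (add_to_promotions, "p1")]
in_order = apply_batch(start, staged)
reversed_order = apply_batch(start, list(reversed(staged)))
print(in_order == reversed_order, in_order.prices["p1"])
```

Note that commutativity here depends on the operations touching independent data; two price reductions with rounding, for instance, would need separate checking.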

- Design for Interchangeability

Resources should be interchangeable wherever possible. For example, if a database connection is unique to a user, it cannot be pooled for use by other users. In contrast, database connections that use a common set of credentials can be shared by all users of the connection pool.

Interchangeability also supports moving state out of your components. Requiring components to maintain state between method calls defeats interchangeability and, ultimately, scalability. Instead, make each method call self-contained, and store state outside the component when it is needed across method calls. A good place to keep state is the database. When calling a method of a stateless component, any state the method requires can either be passed in as a parameter or read from the database. At the end of the call, preserve any state by returning it to the caller or storing it in the database.
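The "pass state in, return state out" option can be sketched as follows. `CartState` and `CheckoutService` are hypothetical names; the service holds only shared, read-only reference data, so any instance (or server) can handle any call, and a cart started on one instance can be continued on another.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CartState:
    """Per-user state that travels with each call instead of living in the component."""
    items: tuple = ()
    total_cents: int = 0


class CheckoutService:
    """Holds no per-user data between calls, so its instances are interchangeable."""

    def __init__(self, price_list):
        # Shared, read-only reference data; not per-user state.
        self._prices = dict(price_list)

    def add_item(self, state, sku):
        # State arrives as a parameter and leaves as the return value.
        return CartState(state.items + (sku,), state.total_cents + self._prices[sku])


prices = {"pen": 150, "pad": 300}
first, second = CheckoutService(prices), CheckoutService(prices)
state = first.add_item(CartState(), "pen")
state = second.add_item(state, "pad")  # a different instance continues the same cart
print(state)
```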

- Partition Resources and Activities

By minimizing relationships between resources and between activities, you minimize the risk of bottlenecks caused by one member of a relationship taking longer than the other. Two resources that depend on one another live and die together.
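One way to partition a shared resource is hash partitioning with one lock per partition (often called lock striping; the technique is named here, not in the original). In the sketch below, callers updating keys in different partitions never wait on each other, whereas a single global lock would serialize every update. The class name and partition count are assumptions for illustration.

```python
import threading
import zlib
from collections import defaultdict


class PartitionedCounter:
    """Per-key counters split into partitions, each guarded by its own lock."""

    def __init__(self, partitions=8):
        self._locks = [threading.Lock() for _ in range(partitions)]
        self._counts = [defaultdict(int) for _ in range(partitions)]

    def _partition(self, key):
        # crc32 maps a key to the same partition on every run, unlike str hash().
        return zlib.crc32(key.encode("utf-8")) % len(self._locks)

    def increment(self, key, amount=1):
        p = self._partition(key)
        with self._locks[p]:  # only callers whose keys share this partition contend
            self._counts[p][key] += amount

    def get(self, key):
        p = self._partition(key)
        with self._locks[p]:
            return self._counts[p].get(key, 0)


counter = PartitionedCounter()
workers = [
    threading.Thread(target=lambda k=key: [counter.increment(k) for _ in range(1000)])
    for key in ("apples", "pears", "plums", "apples")
]
for w in workers:
    w.start()
for w in workers:
    w.join()
print(counter.get("apples"), counter.get("pears"))
```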

Scalability testing is an extension of performance testing. Its purpose is to identify major workloads and resolve the bottlenecks that can impede the application's scalability. As the application is scaled up or out, comparing performance test results against a baseline performance test shows whether the application scales.
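Comparing against a baseline can be reduced to a simple ratio, assuming throughput is the metric being measured: divide the scaled throughput by what perfectly linear scaling would predict. A result of 1.0 means linear scaling, as defined at the start of this document; lower values show how far short the application falls. The function name and parameters are hypothetical.

```python
def scaling_efficiency(baseline_throughput, scaled_throughput, resource_factor):
    """Fraction of ideal linear scaling achieved.

    baseline_throughput: requests per second measured in the baseline configuration.
    scaled_throughput:   requests per second measured after scaling up or out.
    resource_factor:     how many times the baseline resources were provided (e.g. 4 servers vs 1).
    """
    if baseline_throughput <= 0 or resource_factor <= 0:
        raise ValueError("baseline throughput and resource factor must be positive")
    ideal = baseline_throughput * resource_factor  # throughput under perfectly linear scaling
    return scaled_throughput / ideal
```

Tracking this ratio across successive test runs (1, 2, 4, ... servers) makes it easy to see where the gain curve starts to flatten.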

The following best practices are recommended for creating scalable applications.

- Follow the Designing for Scalability Recommendations

- Use Clustering Technologies

Clustering technologies include Network Load Balancing (NLB), the clustering service, and Component Load Balancing (CLB). NLB acts as a front-end cluster that distributes incoming IP traffic across a cluster of up to 32 servers; it is an excellent technique for increasing both scalability and availability. The clustering service acts as a back-end cluster and provides high availability for applications such as database, messaging, and file servers. CLB distributes the workload across multiple servers running the site's business logic.

- Consider Logical versus Physical Tiers

Logical separation should always be considered when designing a distributed application. The application should be logically partitioned into a user interface layer, a business logic layer, and a data access layer. Logical separation does not mandate physical separation, but it makes physical separation possible, which in turn improves scalability by allowing the application to scale out across different machines.
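A minimal sketch of logical layering, assuming hypothetical class names: the business layer depends only on a data-access contract (a `typing.Protocol`), so the in-memory implementation could later be replaced by one that calls a separate data tier on another machine without changing the business or presentation code.

```python
from typing import Protocol


class ProductRepository(Protocol):
    """Data access layer contract; the business layer depends only on this."""

    def price_cents(self, sku: str) -> int: ...


class InMemoryProductRepository:
    """One possible implementation; a remote one could replace it without other changes."""

    def __init__(self, prices):
        self._prices = dict(prices)

    def price_cents(self, sku: str) -> int:
        return self._prices[sku]


class PricingService:
    """Business logic layer: knows nothing about where the data physically lives."""

    def __init__(self, repo: ProductRepository):
        self._repo = repo

    def order_total(self, skus):
        return sum(self._repo.price_cents(s) for s in skus)


def render_total(service, skus):
    # User interface layer: formatting only.
    total = service.order_total(skus)
    return f"Total: {total // 100}.{total % 100:02d}"


service = PricingService(InMemoryProductRepository({"pen": 150, "pad": 300}))
print(render_total(service, ["pen", "pad"]))
```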

- Isolate Transactional Methods

Separate transactional methods from non-transactional methods. Placing non-transactional methods in a component that requires transactions for its transactional methods hurts scalability, because every call to that component incurs the cost of a transaction even when no transaction is needed.
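As a rough analogue (the original concerns component-level transaction settings, which this does not reproduce), the sketch below splits read-only queries and data-modifying commands into separate hypothetical classes using Python's `sqlite3`. Only the command methods run inside an explicit transaction: the connection's context manager commits on success and rolls back on an exception.

```python
import sqlite3


class OrderQueries:
    """Read-only methods: no explicit transaction is opened."""

    def __init__(self, conn):
        self._conn = conn

    def count(self):
        return self._conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]


class OrderCommands:
    """Methods that modify data: each runs inside its own transaction."""

    def __init__(self, conn):
        self._conn = conn

    def place(self, sku, qty):
        with self._conn:  # commits on success, rolls back if either statement fails
            self._conn.execute("INSERT INTO orders (sku, qty) VALUES (?, ?)", (sku, qty))
            self._conn.execute("UPDATE stock SET qty = qty - ? WHERE sku = ?", (qty, sku))


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (sku TEXT, qty INTEGER)")
conn.execute("CREATE TABLE stock (sku TEXT, qty INTEGER)")
conn.execute("INSERT INTO stock VALUES ('pen', 10)")
conn.commit()

OrderCommands(conn).place("pen", 3)
print(OrderQueries(conn).count())
```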

- Eliminate Business Layer State When Possible

You can increase the scalability of a server-side layer by making it as stateless as possible, because a server-side component that must maintain state between calls consumes resources. For example, a CAO (Client-Activated Object) in .NET Remoting remains alive between calls and can therefore maintain state until its lifetime lease expires; while it is alive, it holds server resources on behalf of a single client. On the other hand, an SAO (Server-Activated Object) configured as a SingleCall object is destroyed after each call, releasing any resources it was holding.

- Review Best Practices for Performance